Google just dropped a new family of open AI models called Gemma 4. The company says these models are smarter than previous versions while using less computing power.

Since Google first launched its Gemma models, people have downloaded them more than 400 million times. The community has also created more than 100,000 variants, including versions adapted to run on smaller systems. Now with Gemma 4, Google wants to help developers build AI that can think through problems step by step and work with outside tools.

Google released four versions of Gemma 4. Two are smaller and designed for phones and other gadgets: the Effective 2B and Effective 4B. Two are larger and more powerful: a 26B model and a 31B model.

The bigger models are already getting good reviews. The 31B version ranks third on a popular industry leaderboard called Arena AI. The 26B version ranks sixth. Google claims these models beat others that are 20 times larger.

The smaller Gemma 4 models focus on running well on mobile devices. They work completely offline, without an internet connection. Google teamed up with its own Pixel phone team, plus chipmakers Qualcomm and MediaTek, to make this happen.

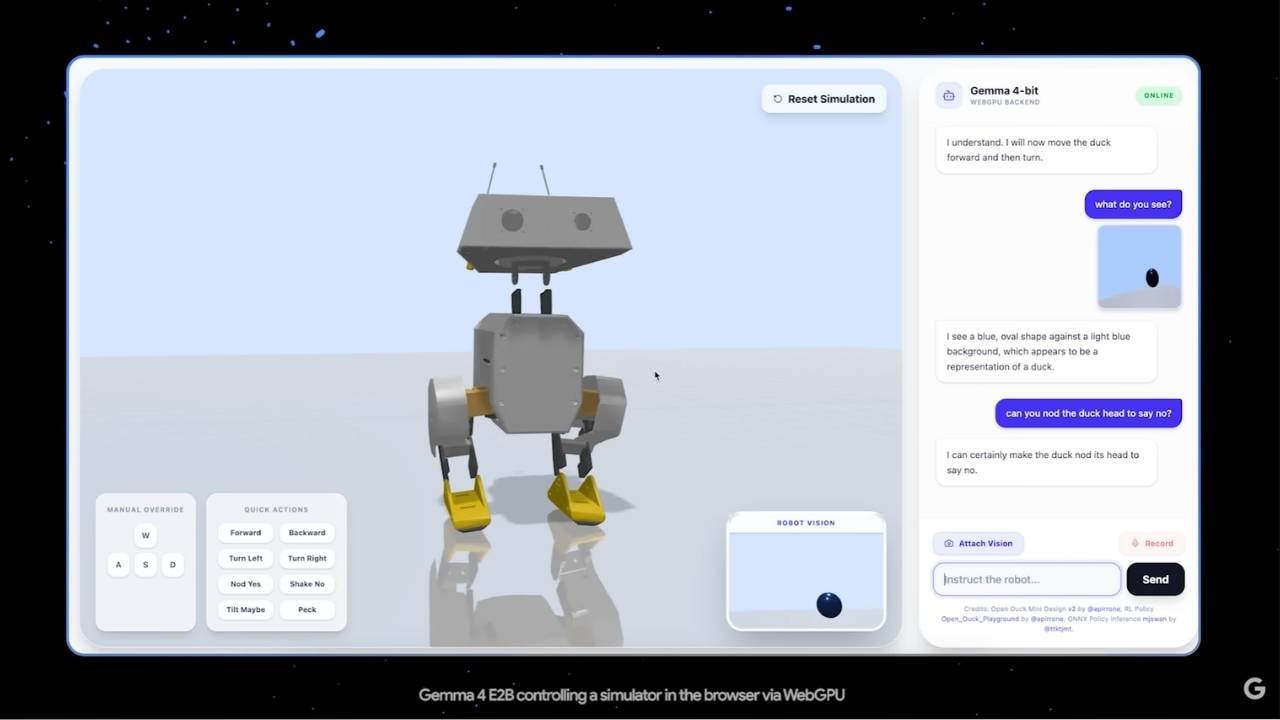

All four models can handle images and video. They can read text from pictures and understand charts. The two smallest models can also take voice input.

Developers can use these models to build AI that calls other tools or works with different apps. The models can handle long documents too. The smaller ones manage about 128,000 tokens of context, while the larger ones handle up to 256,000.
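To give a feel for what those context sizes mean in practice, here is a minimal sketch that estimates whether a document would fit. It assumes roughly four characters per token, a common rule of thumb for English text and not a property of Gemma's tokenizer. The names `estimate_tokens`, `fits_in_context`, and the `reserve_for_output` budget are made up for illustration.

```python
# Rough check of whether a document fits in a model's context window.
# CHARS_PER_TOKEN is a rule of thumb for English text, not a property of
# Gemma's tokenizer; use the real tokenizer when exact counts matter.

CHARS_PER_TOKEN = 4  # assumption (heuristic)

# Context sizes as reported in the article, in tokens.
CONTEXT_TOKENS = {"small": 128_000, "large": 256_000}


def estimate_tokens(text: str) -> int:
    """Estimate the token count from the character count, rounding up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(text: str, model_size: str, reserve_for_output: int = 2_000) -> bool:
    """True if the estimated prompt plus a reserved output budget fits."""
    return estimate_tokens(text) + reserve_for_output <= CONTEXT_TOKENS[model_size]


document = "word " * 150_000  # 750,000 characters of placeholder text
print(estimate_tokens(document))            # 187500
print(fits_in_context(document, "small"))   # False
print(fits_in_context(document, "large"))   # True
```

Under this estimate, a 750,000-character document is too long for the smaller models but fits within the larger models' window.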

The models understand more than 140 languages. They also do better than older versions on math tests and at following instructions.

The two larger Gemma 4 models can run on a single NVIDIA H100 graphics card. There are also compressed versions that work on consumer gaming graphics cards. The 26B model is built to be fast because it uses only about 3.8 billion active parameters at a time.
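Some back-of-the-envelope arithmetic shows why a single H100 is plausible and why compression matters for smaller cards. The sketch below assumes the model names reflect total parameter counts (26 and 31 billion), that the card is the 80 GB H100 variant, and that "compressed" means storing weights at 8 or 4 bits instead of 16. None of these details come from Google's announcement. It counts weights only, so real memory use will be higher.

```python
# Weight-memory estimate only. Ignores activations, the KV cache, and
# runtime overhead, so actual usage will be higher than these figures.

H100_MEMORY_GB = 80  # assumption: the 80 GB H100 variant


def weight_memory_gb(params_billions: float, bits_per_param: int) -> float:
    """Approximate memory for model weights in gigabytes (1 GB = 1e9 bytes)."""
    bytes_total = params_billions * 1e9 * bits_per_param / 8
    return bytes_total / 1e9


# Assumption: "26B" and "31B" are total parameter counts in billions.
for name, size_b in [("26B", 26), ("31B", 31)]:
    for bits in (16, 8, 4):
        gb = weight_memory_gb(size_b, bits)
        verdict = "fits" if gb < H100_MEMORY_GB else "does not fit"
        print(f"{name} at {bits}-bit: ~{gb:.1f} GB of weights ({verdict} in {H100_MEMORY_GB} GB)")
```

By this estimate, the 31B model needs about 62 GB for 16-bit weights, which leaves some headroom on an 80 GB card, and about 15.5 GB at 4 bits, which is within reach of higher-end gaming cards.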

The smaller models are even lighter. They use only 2 billion and 4 billion active parameters during operation, which helps keep phones from overheating or draining the battery quickly.

You can try Gemma 4 right now through Google AI Studio for the larger models, or through Google AI Edge Gallery for the smaller ones. The model files are available on Hugging Face, Kaggle, and Ollama.

Many popular AI tools already work with Gemma 4, including llama.cpp, Ollama, and LM Studio. Android developers can also use these models with Google’s AICore tools.
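For readers who want to try a local setup, here is a minimal sketch of calling a model through Ollama's local HTTP API from Python. It assumes Ollama is running on its default port and that a Gemma 4 model has already been pulled. The tag `gemma4` is a placeholder rather than a confirmed name, so check Ollama's model library for the real one. The helper names `build_request` and `generate` are also made up for illustration.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint
MODEL_TAG = "gemma4"  # hypothetical placeholder; look up the actual tag before use


def build_request(prompt: str, model: str = MODEL_TAG) -> urllib.request.Request:
    """Build a non-streaming generate request for the local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = MODEL_TAG) -> str:
    """Send the prompt and return the generated text (requires a running server)."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=120) as resp:
        return json.loads(resp.read())["response"]


# Example call, once the server is running and the model is pulled:
# print(generate("Explain what a context window is in one sentence."))
```

Setting `stream` to false makes the server return one JSON object instead of a stream of partial responses, which keeps the example short.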